mirror of

https://github.com/kohya-ss/sd-scripts.git

synced 2026-04-06 21:52:27 +00:00

Compare commits

309 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 08ae46b163 | | |
| | 4e5db58a71 | | |
| | a9d29ac78c | | |
| | 5c065eee79 | | |
| | 048e7cd428 | | |
| | a76ad2d1d5 | | |
| | 9d0f9736bf | | |
| | 00bb8a65a6 | | |
| | dac2bd163a | | |
| | 78d1fb5ce6 | | |
| | 14d7b24619 | | |
| | 3bc0d83769 | | |
| | ffdfd5f615 | | |
| | d01d953262 | | |
| | 914d1505df | | |
| | 496c8cdc09 | | |
| | 82713e9aa6 | | |
| | e067d64b53 | | |
| | 3d400667d2 | | |
| | 2aef2872fb | | |
| | 43c0a69843 | | |
| | 8aed5125de | | |
| | e0f007f2a9 | | |
| | 3c29784825 | | |
| | 8f1e930bf4 | | |
| | f771396e90 | | |
| | f67b3f4452 | | |
| | 21f5b618c3 | | |
| | 5471b0deb0 | | |
| | 2b1a3080e7 | | |
| | 92a1af8024 | | |
| | b35b053b8d | | |
| | 55521eece0 | | |
| | b32abdd327 | | |
| | d1ecfde487 | | |
| | 04ad46a9a7 | | |
| | 4c561411aa | | |
| | 43a41c6c43 | | |
| | 5367daa210 | | |
| | b825e4602c | | |
| | 188e54b760 | | |
| | 2c5f5c324a | | |
| | 5777be5208 | | |
| | e727a0d222 | | |
| | cdd8882a01 | | |
| | 3f3502fb57 | | |
| | 20c00603a8 | | |
| | 9239fefa52 | | |
| | 53d60543e5 | | |
| | 22e3aca89c | | |
| | 8d86f58174 | | |
| | e5cc64a563 | | |
| | c7406d6b27 | | |
| | d2da3c4236 | | |
| | 2bad87f2f6 | | |
| | ed62e566bb | | |
| | 51b3dc2c11 | | |
| | 74f4a8fab9 | | |
| | a75baf9143 | | |
| | b03721b4d9 | | |
| | 6b790bace6 | | |
| | dcaecfd20b | | |
| | 553ac4aa1b | | |
| | f0c8c95871 | | |
| | c2e1d4b71b | | |
| | 3a72e6f003 | | |
| | f7b5abb595 | | |
| | b8ad17902f | | |
| | 9a9ac79edf | | |
| | 6473aa1dd7 | | |
| | b599adc938 | | |
| | 5e96e1369d | | |
| | c0be52a773 | | |
| | fb312acb7f | | |
| | 938bd71844 | | |
| | b3020db63f | | |
| | e42b2f7aa9 | | |
| | f9478f0d47 | | |
| | 4fc9f1f8c5 | | |
| | 5a3d1a57b6 | | |
| | 7db98baa86 | | |
| | d591891048 | | |
| | 3a93d18bb5 | | |
| | 7511674333 | | |
| | 883bd1269c | | |
| | 2aa27b7a4b | | |
| | 5ea5fefcd2 | | |
| | 6a79ac6a03 | | |
| | ea2dfd09ef | | |
| | 7380801dfc | | |
| | ae33d72479 | | |
| | 19c2752e87 | | |
| | d80af9c17b | | |
| | fb230aff1b | | |
| | 8cbd3f4fca | | |
| | b18db9fbbd | | |
| | b1635f4bf6 | | |
| | 44013fe0ef | | |
| | 9fd7fb813d | | |
| | 89a9d3a92c | | |
| | 9682772b09 | | |
| | b18a09edb5 | | |
| | c086e85d17 | | |
| | 26efa88908 | | |
| | 1bec2bfe07 | | |
| | 76f53429be | | |
| | 73d612ff9c | | |
| | 58a809eaff | | |
| | 93134cdd15 | | |
| | b7e7ee387a | | |
| | 57d8483eaf | | |
| | 949ee6fcc9 | | |
| | 26a81d075c | | |
| | 8c3a52ecc9 | | |
| | 86f4e20337 | | |
| | 9abbee0632 | | |
| | 74eba06d13 | | |
| | 4e1acc62f9 | | |
| | c20745b6e8 | | |
| | 4cabb37977 | | |
| | 86eba1d2cf | | |
| | 05940940c0 | | |
| | 6bbb4d426e | | |
| | 7817e95a86 | | |
| | 443ce7a30b | | |
| | ed2e431950 | | |
| | 0fef7b4684 | | |
| | 67e698af67 | | |
| | 7c35aee042 | | |
| | 481823796e | | |
| | 835b0d54cd | | |
| | 505768ea86 | | |
| | 1614d30d1b | | |
| | 25566182a8 | | |
| | 6dffc88b44 | | |
| | 64d5ceda71 | | |
| | e8806f29dc | | |
| | 2ce9ad235c | | |
| | 3fb12e41b7 | | |
| | 591e3c1813 | | |
| | b5ba463512 | | |
| | e0d7f1d99d | | |
| | a68501bede | | |
| | c425afb08b | | |
| | 46029b2707 | | |
| | 02acae8e1d | | |
| | 91a50ea637 | | |
| | 9f644d8dc3 | | |
| | 36dc97c841 | | |
| | e6bad080cb | | |
| | 7f17237ada | | |
| | ebd3ea380c | | |
| | bf3a13bb4e | | |
| | 1a170c4762 | | |
| | 552cdbd6d8 | | |
| | a86514f1ad | | |
| | 2e8a3d20dd | | |
| | 66051883fb | | |
| | f7fbdc4b2a | | |
| | 00f1296537 | | |
| | ebdb624d29 | | |
| | 93df55d597 | | |
| | 56bc806d52 | | |
| | 25f8ac731f | | |
| | 4ba1667978 | | |
| | 0ca064287e | | |
| | a3171714ce | | |
| | 4a1668fe37 | | |
| | 4eb356f165 | | |
| | a7218574f2 | | |
| | ddfe94b33b | | |
| | 8746188ed7 | | |
| | 1bfcf164f1 | | |
| | d3bc5a1413 | | |
| | 6e279730cf | | |
| | 5e817e4343 | | |
| | b4636d4185 | | |
| | 22ee0ac467 | | |
| | 17089b1287 | | |
| | 7ee808d5d7 | | |
| | 9ff26af68b | | |
| | 7dbcef745a | | |
| | cae42728ab | | |
| | 50f65d683d | | |
| | 0fc1cc8076 | | |
| | 943eae1211 | | |
| | 4c928c8d12 | | |
| | 687044519b | | |
| | 758323532b | | |
| | 8bd844cdc1 | | |
| | 4d4ebf600e | | |
| | e6a8c9d269 | | |
| | da48f74e7b | | |
| | e5d9f483f0 | | |
| | 303c3410e2 | | |
| | de1dde1a06 | | |
| | 3eb8fb1875 | | |
| | fda66db0d8 | | |
| | 3815b82bef | | |
| | 37fbefb3cd | | |
| | c6e28faa57 | | |
| | a888223869 | | |
| | d30ea7966d | | |
| | df9cb2f11c | | |
| | 8544e219b0 | | |
| | 186a2665ad | | |
| | f2f2ce0d7d | | |
| | c9fda104b4 | | |
| | aa40cb9345 | | |
| | b8734405c6 | | |
| | c2c1261b43 | | |
| | 48110bcb23 | | |
| | 60e5793d5e | | |
| | 98b0cf0b3d | | |
| | 88515c2985 | | |
| | 89f5b3b8e6 | | |
| | 61ec60a893 | | |
| | 199a3cbae4 | | |
| | 74eb43190e | | |
| | 5851b2b773 | | |
| | e4695e9359 | | |
| | dfeadf9e52 | | |
| | b3d3f0c8ac | | |
| | 4fe1dd6a1c | | |
| | 95ee349e2a | | |
| | a75fd3964a | | |
| | 29c9008e07 | | |
| | bf691aef69 | | |
| | 807bdf9cc9 | | |
| | eba142ccb2 | | |
| | c1b14fcdd6 | | |
| | 9fd91d26a3 | | |
| | 9622082eb8 | | |
| | e4f9b2b715 | | |
| | 895a599d34 | | |
| | 58d24ba254 | | |
| | 974674242e | | |
| | de37fd9906 | | |
| | 0c4423d9dc | | |
| | 2e4ce0fdff | | |
| | f981dfd38a | | |
| | a84ca297bd | | |
| | 673f9ced47 | | |
| | c5aae65003 | | |
| | d8da85b38b | | |
| | c4bc435bc4 | | |
| | 4a7b814700 | | |
| | 223640e1ae | | |
| | fbaf373c8a | | |
| | 6b62c44022 | | |
| | 1945fa186d | | |
| | 82e585cf01 | | |
| | 80af4c0c42 | | |
| | 9f1d3aca24 | | |
| | 2efced0a9a | | |
| | 40d1bf3809 | | |
| | 4735b21318 | | |
| | fac1813ac0 | | |
| | cbfe8126d6 | | |
| | 54928fac7b | | |
| | 39a0293800 | | |
| | 4dd22f4dc8 | | |
| | 1b222dbf9b | | |
| | d62725b644 | | |
| | dcd101b3d5 | | |
| | f56988b252 | | |
| | 6d10233a53 | | |
| | 4c35006731 | | |
| | e31177adf3 | | |
| | 6b522b34c1 | | |
| | 305bda2928 | | |
| | 85d8b49129 | | |
| | 61a61c51ee | | |
| | bda0e8333c | | |
| | f192338874 | | |
| | 885fd9ec90 | | |
| | 0f20453997 | | |
| | 8215f12c45 | | |
| | 64de791f2c | | |
| | 7e51bd837e | | |
| | eed13e6cb5 | | |
| | 7ada935dfc | | |
| | a39a3b4a3d | | |
| | 445b34de1f | | |
| | 96d695dd83 | | |
| | da05ad6339 | | |
| | bda3c7f27a | | |
| | 3800e145bd | | |
| | d904bb76c0 | | |
| | 0a884da984 | | |
| | 689c8414df | | |
| | 02b1b2b8fe | | |
| | 5f6eb13df9 | | |
| | 0f398fea65 | | |
| | 4b68913dbe | | |
| | 890f6d5a9c | | |
| | cf7832fbb1 | | |
| | dfbecbc4d7 | | |
| | 600d78ae08 | | |
| | 504e27af18 | | |
| | ca85f18eae | | |
| | dadea12ad2 | | |
| | e53adbdbcc | | |
| | 20055752bd | | |
| | 7cca345345 | | |
| | 5f7693be04 | | |
| | d9bb4aa4f9 | | |
| | c5cca931ab | | |
| | bedea62ff0 | | |

21

.github/workflows/typos.yml

vendored

Normal file

@@ -0,0 +1,21 @@

---
# yamllint disable rule:line-length
name: Typos

on: # yamllint disable-line rule:truthy
  push:
  pull_request:
    types:
      - opened
      - synchronize
      - reopened

jobs:
  build:
    runs-on: ubuntu-latest

    steps:
      - uses: actions/checkout@v3

      - name: typos-action
        uses: crate-ci/typos@v1.13.10

4

.gitignore

vendored

@@ -1,3 +1,7 @@

logs
__pycache__
wd14_tagger_model
venv
*.egg-info
build
.vscode

135

README-ja.md

@@ -1,7 +1,7 @@

|

||||

## About this repository

This repository contains scripts for Stable Diffusion training, image generation, and other utilities.

[README in English](./README.md)

[README in English](./README.md) ← update information is available here

For easier use (GUI, PowerShell scripts, and so on), see [bmaltais's repository](https://github.com/bmaltais/kohya_ss) (in English). Thanks to bmaltais.

|

||||

|

||||

@@ -14,10 +14,131 @@ GUIやPowerShellスクリプトなど、より使いやすくする機能が[bma

|

||||

|

||||

## Usage

Articles are available on note.com; please refer to them (they may be moved here in the future).

Articles are available in this repository and on note.com; please refer to them (all of them may be moved here in the future).

* [DreamBooth training](./train_db_README-ja.md)
* [Fine-tuning guide](./fine_tune_README_ja.md):
Includes captioning with BLIP and tagging with DeepDanbooru or the WD14 tagger
* [LoRA training](./train_network_README-ja.md)
* [Textual Inversion training](./train_ti_README-ja.md)
* note.com [Image generation script](https://note.com/kohya_ss/n/n2693183a798e)
* note.com [Model conversion script](https://note.com/kohya_ss/n/n374f316fe4ad)

|

||||

|

||||

## Programs required on Windows

Python 3.10.6 and Git are required.

- Python 3.10.6: https://www.python.org/ftp/python/3.10.6/python-3.10.6-amd64.exe
- git: https://git-scm.com/download/win

If you use PowerShell, change the security settings as follows so that venv can be used.
(Note that this allows scripts in general to run, not only venv.)

- Open PowerShell as administrator.
- Type `Set-ExecutionPolicy Unrestricted` and answer Y.
- Close the administrator PowerShell.

## Installation on Windows

The example below installs PyTorch 1.12.1 for CUDA 11.6. If you use the CUDA 11.3 build or PyTorch 1.13, adjust the commands accordingly.

(If the `python -m venv ...` line only prints "python", change `python` to `py`, as in `py -m venv ...`.)

Open a regular (non-administrator) PowerShell and run the following in order.

|

||||

|

||||

```powershell

|

||||

git clone https://github.com/kohya-ss/sd-scripts.git

|

||||

cd sd-scripts

|

||||

|

||||

python -m venv venv

|

||||

.\venv\Scripts\activate

|

||||

|

||||

pip install torch==1.12.1+cu116 torchvision==0.13.1+cu116 --extra-index-url https://download.pytorch.org/whl/cu116

|

||||

pip install --upgrade -r requirements.txt

|

||||

pip install -U -I --no-deps https://github.com/C43H66N12O12S2/stable-diffusion-webui/releases/download/f/xformers-0.0.14.dev0-cp310-cp310-win_amd64.whl

|

||||

|

||||

cp .\bitsandbytes_windows\*.dll .\venv\Lib\site-packages\bitsandbytes\

|

||||

cp .\bitsandbytes_windows\cextension.py .\venv\Lib\site-packages\bitsandbytes\cextension.py

|

||||

cp .\bitsandbytes_windows\main.py .\venv\Lib\site-packages\bitsandbytes\cuda_setup\main.py

|

||||

|

||||

accelerate config

|

||||

```

|

||||

|

||||

<!--

|

||||

pip install torch==1.13.1+cu117 torchvision==0.14.1+cu117 --extra-index-url https://download.pytorch.org/whl/cu117

|

||||

pip install --use-pep517 --upgrade -r requirements.txt

|

||||

pip install -U -I --no-deps xformers==0.0.16

|

||||

-->

|

||||

|

||||

In Command Prompt, use the following instead.

|

||||

|

||||

|

||||

```bat

|

||||

git clone https://github.com/kohya-ss/sd-scripts.git

|

||||

cd sd-scripts

|

||||

|

||||

python -m venv venv

|

||||

.\venv\Scripts\activate

|

||||

|

||||

pip install torch==1.12.1+cu116 torchvision==0.13.1+cu116 --extra-index-url https://download.pytorch.org/whl/cu116

|

||||

pip install --upgrade -r requirements.txt

|

||||

pip install -U -I --no-deps https://github.com/C43H66N12O12S2/stable-diffusion-webui/releases/download/f/xformers-0.0.14.dev0-cp310-cp310-win_amd64.whl

|

||||

|

||||

copy /y .\bitsandbytes_windows\*.dll .\venv\Lib\site-packages\bitsandbytes\

|

||||

copy /y .\bitsandbytes_windows\cextension.py .\venv\Lib\site-packages\bitsandbytes\cextension.py

|

||||

copy /y .\bitsandbytes_windows\main.py .\venv\Lib\site-packages\bitsandbytes\cuda_setup\main.py

|

||||

|

||||

accelerate config

|

||||

```

|

||||

|

||||

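(Note: ``python -m venv venv`` appears to be safer than ``python -m venv --system-site-packages venv``, so the command has been changed. If packages are installed in the global Python, the latter causes various problems.)

Answer the accelerate config questions as follows. (If you train with bf16, answer bf16 to the last question.)

Note: from 0.15.0 on, pressing the cursor keys to select an option crashes in Japanese environments. You can select with the number keys 0, 1, 2, ... instead.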

|

||||

|

||||

```txt

|

||||

- This machine

|

||||

- No distributed training

|

||||

- NO

|

||||

- NO

|

||||

- NO

|

||||

- all

|

||||

- fp16

|

||||

```

|

||||

|

||||

Note: in some cases the error ``ValueError: fp16 mixed precision requires a GPU`` appears. If so, answer "0" to the sixth question (``What GPU(s) (by id) should be used for training on this machine as a comma-separated list? [all]:``). (The GPU with id `0` will be used.)

### About PyTorch and xformers versions

Training may not work well with other versions. Unless you have a specific reason, use the specified versions.

## Upgrade

When a new release is available, you can update with the following commands.

|

||||

|

||||

```powershell

|

||||

cd sd-scripts

|

||||

git pull

|

||||

.\venv\Scripts\activate

|

||||

pip install --use-pep517 --upgrade -r requirements.txt

|

||||

```

|

||||

|

||||

Once the commands succeed, the new version is ready to use.

## Credits

The LoRA implementation is based on [cloneofsimo's repository](https://github.com/cloneofsimo/lora). Thank you.

## License

The scripts are licensed under ASL 2.0 (including code derived from Diffusers and cloneofsimo's repository), but some parts include code under other licenses.

|

||||

|

||||

[Memory Efficient Attention Pytorch](https://github.com/lucidrains/memory-efficient-attention-pytorch): MIT

|

||||

|

||||

[bitsandbytes](https://github.com/TimDettmers/bitsandbytes): MIT

|

||||

|

||||

[BLIP](https://github.com/salesforce/BLIP): BSD-3-Clause

|

||||

|

||||

|

||||

* [Environment setup and DreamBooth training script](https://note.com/kohya_ss/n/nee3ed1649fb6)
* [Fine-tuning script](https://note.com/kohya_ss/n/nbf7ce8d80f29):
Including BLIP captioning and tagging by DeepDanbooru or WD14 tagger
* [Image generation script](https://note.com/kohya_ss/n/n2693183a798e)
* [Model conversion script](https://note.com/kohya_ss/n/n374f316fe4ad)

|

||||

|

||||

227

README.md

@@ -1,5 +1,8 @@

|

||||

This repository contains training, generation and utility scripts for Stable Diffusion.

|

||||

|

||||

[__Change History__](#change-history) has been moved to the bottom of the page.

[README in Japanese](./README-ja.md)

|

||||

|

||||

For easier use (GUI and PowerShell scripts etc...), please visit [the repository maintained by bmaltais](https://github.com/bmaltais/kohya_ss). Thanks to @bmaltais!

|

||||

@@ -7,22 +10,226 @@ For easier use (GUI and PowerShell scripts etc...), please visit [the repository

|

||||

This repository contains the scripts for:

|

||||

|

||||

* DreamBooth training, including U-Net and Text Encoder

|

||||

* fine-tuning (native training), including U-Net and Text Encoder

|

||||

* image generation

|

||||

* model conversion (supports 1.x and 2.x, Stable Diffusion ckpt/safetensors and Diffusers)

|

||||

* Fine-tuning (native training), including U-Net and Text Encoder

|

||||

* LoRA training

|

||||

* Textual Inversion training

|

||||

* Image generation

|

||||

* Model conversion (supports 1.x and 2.x, Stable Diffusion ckpt/safetensors and Diffusers)

|

||||

|

||||

## About requirements_*.txt

|

||||

__Stable Diffusion web UI now seems to support LoRA trained by ``sd-scripts``.__ (SD 1.x based only) Thank you for great work!!!

|

||||

|

||||

These files do not contain requirements for PyTorch and Diffusers, because the appropriate versions depend on your environment. Please install PyTorch first, then Diffusers.

|

||||

## About requirements.txt

|

||||

|

||||

The scripts is tested with PyTorch 1.12.1 and 1.13.0, Diffusers 0.10.2.

|

||||

These files do not contain requirements for PyTorch, because the appropriate version depends on your environment. Please install PyTorch first (see the installation guide below).

|

||||

|

||||

The scripts are tested with PyTorch 1.12.1 and 1.13.0, Diffusers 0.10.2.

|

||||

|

||||

## Links to how-to-use documents

|

||||

|

||||

All documents are currently in Japanese and assume command-line (CUI) usage.

|

||||

|

||||

* [Environment setup and DreamBooth training guide](https://note.com/kohya_ss/n/nee3ed1649fb6)

|

||||

* [Fine-tuning step-by-step guide](https://note.com/kohya_ss/n/nbf7ce8d80f29):

|

||||

* [DreamBooth training guide](./train_db_README-ja.md)

|

||||

* [Step by Step fine-tuning guide](./fine_tune_README_ja.md):

|

||||

Including BLIP captioning and tagging by DeepDanbooru or WD14 tagger

|

||||

* [Image generation](https://note.com/kohya_ss/n/n2693183a798e)

|

||||

* [Model conversion](https://note.com/kohya_ss/n/n374f316fe4ad)

|

||||

* [training LoRA](./train_network_README-ja.md)

|

||||

* [training Textual Inversion](./train_ti_README-ja.md)

|

||||

* note.com [Image generation](https://note.com/kohya_ss/n/n2693183a798e)

|

||||

* note.com [Model conversion](https://note.com/kohya_ss/n/n374f316fe4ad)

|

||||

|

||||

## Windows Required Dependencies

|

||||

|

||||

Python 3.10.6 and Git:

|

||||

|

||||

- Python 3.10.6: https://www.python.org/ftp/python/3.10.6/python-3.10.6-amd64.exe

|

||||

- git: https://git-scm.com/download/win

|

||||

|

||||

Give unrestricted script access to powershell so venv can work:

|

||||

|

||||

- Open an administrator powershell window

|

||||

- Type `Set-ExecutionPolicy Unrestricted` and answer A

|

||||

- Close admin powershell window

|

||||

|

||||

## Windows Installation

|

||||

|

||||

Open a regular Powershell terminal and type the following inside:

|

||||

|

||||

```powershell

|

||||

git clone https://github.com/kohya-ss/sd-scripts.git

|

||||

cd sd-scripts

|

||||

|

||||

python -m venv venv

|

||||

.\venv\Scripts\activate

|

||||

|

||||

pip install torch==1.12.1+cu116 torchvision==0.13.1+cu116 --extra-index-url https://download.pytorch.org/whl/cu116

|

||||

pip install --upgrade -r requirements.txt

|

||||

pip install -U -I --no-deps https://github.com/C43H66N12O12S2/stable-diffusion-webui/releases/download/f/xformers-0.0.14.dev0-cp310-cp310-win_amd64.whl

|

||||

|

||||

cp .\bitsandbytes_windows\*.dll .\venv\Lib\site-packages\bitsandbytes\

|

||||

cp .\bitsandbytes_windows\cextension.py .\venv\Lib\site-packages\bitsandbytes\cextension.py

|

||||

cp .\bitsandbytes_windows\main.py .\venv\Lib\site-packages\bitsandbytes\cuda_setup\main.py

|

||||

|

||||

accelerate config

|

||||

```

|

||||

|

||||

Update: ``python -m venv venv`` seems to be safer than ``python -m venv --system-site-packages venv`` (some users have packages installed in the global Python).
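
To check which kind of environment an existing `venv` folder is, one option is to read the `pyvenv.cfg` file that Python's `venv` module writes into the environment. The sketch below is only an illustration: the function name `uses_system_site_packages` is hypothetical and not part of this repository, and treating a missing key as "isolated" is an assumption of the sketch.

```python
from pathlib import Path


def uses_system_site_packages(venv_dir):
    """Return True if the venv at venv_dir can see globally installed packages.

    Hypothetical helper: it reads the `include-system-site-packages` key
    that the standard `venv` module records in pyvenv.cfg.
    """
    cfg_path = Path(venv_dir) / "pyvenv.cfg"
    for line in cfg_path.read_text(encoding="utf-8").splitlines():
        key, sep, value = line.partition("=")
        if sep and key.strip().lower() == "include-system-site-packages":
            return value.strip().lower() == "true"
    # Key not found: this sketch assumes the environment is isolated.
    return False
```

Run from the `sd-scripts` folder, `uses_system_site_packages("venv")` should report `False` for an environment created with plain ``python -m venv venv``.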

|

||||

|

||||

Answers to accelerate config:

|

||||

|

||||

```txt

|

||||

- This machine

|

||||

- No distributed training

|

||||

- NO

|

||||

- NO

|

||||

- NO

|

||||

- all

|

||||

- fp16

|

||||

```

|

||||

|

||||

Note: some users report that ``ValueError: fp16 mixed precision requires a GPU`` occurs during training. In this case, answer `0` to the 6th question:

|

||||

``What GPU(s) (by id) should be used for training on this machine as a comma-separated list? [all]:``

|

||||

|

||||

(Single GPU with id `0` will be used.)

|

||||

|

||||

### about PyTorch and xformers

|

||||

|

||||

Other versions of PyTorch and xformers seem to have problems with training.

|

||||

If there is no other reason, please install the specified version.

|

||||

|

||||

## Upgrade

|

||||

|

||||

When a new release comes out you can upgrade your repo with the following command:

|

||||

|

||||

```powershell

|

||||

cd sd-scripts

|

||||

git pull

|

||||

.\venv\Scripts\activate

|

||||

pip install --use-pep517 --upgrade -r requirements.txt

|

||||

```

|

||||

|

||||

Once the commands have completed successfully you should be ready to use the new version.

|

||||

|

||||

## Credits

|

||||

|

||||

The implementation for LoRA is based on [cloneofsimo's repo](https://github.com/cloneofsimo/lora). Thank you for great work!!!

|

||||

|

||||

## License

|

||||

|

||||

The majority of scripts are licensed under ASL 2.0 (including code from Diffusers and cloneofsimo's repository); however, portions of the project are available under separate license terms:

|

||||

|

||||

[Memory Efficient Attention Pytorch](https://github.com/lucidrains/memory-efficient-attention-pytorch): MIT

|

||||

|

||||

[bitsandbytes](https://github.com/TimDettmers/bitsandbytes): MIT

|

||||

|

||||

[BLIP](https://github.com/salesforce/BLIP): BSD-3-Clause

|

||||

|

||||

## Change History

|

||||

|

||||

- 19 Feb. 2023, 2023/2/19:
  - Add ``--use_lion_optimizer`` to each training script to use [Lion optimizer](https://github.com/lucidrains/lion-pytorch).
    - Please install Lion optimizer with ``pip install lion-pytorch`` (it is not in ``requirements.txt`` currently).
  - Add ``--lowram`` option to ``train_network.py``. It loads models to VRAM instead of RAM (for machines which have more VRAM than RAM, such as Colab and Kaggle). Thanks to Isotr0py!
    - Default behavior (without ``--lowram``) has reverted to the same as before 14 Feb.
  - Fixed git commit hash to be set correctly regardless of the working directory. Thanks to vladmandic!

|

||||

|

||||

- 16 Feb. 2023, 2023/2/16:
  - Noise offset is recorded to the metadata. Thanks to space-nuko!
  - Show the moving average of the loss during training in ``train_network.py`` and ``train_db.py``. This prevents the displayed loss from jumping at the start of each epoch. Thanks to shirayu!
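
As an illustration of why a moving average steadies the displayed loss, here is a minimal sketch of a fixed-window running mean. It is not taken from ``train_network.py`` or ``train_db.py``, which may compute the average differently; the class name `MovingAverageLoss` and the `window` parameter are hypothetical.

```python
from collections import deque


class MovingAverageLoss:
    """Running mean over the most recent `window` loss values (illustrative only)."""

    def __init__(self, window=100):
        # deque(maxlen=...) silently discards the oldest value once full.
        self.values = deque(maxlen=window)

    def update(self, loss):
        """Record one step's loss and return the current moving average."""
        self.values.append(float(loss))
        return sum(self.values) / len(self.values)
```

Calling `update()` once per training step and printing its return value, rather than the raw loss, means a single outlier step moves the displayed number by at most `1/window` of its size.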

|

||||

- 14 Feb. 2023, 2023/2/14:
  - Add support for multi-GPU training in ``train_network.py``. Thanks to Isotr0py!
  - Add ``--verbose`` option for ``resize_lora.py``. For details of the information shown, see [this PR](https://github.com/kohya-ss/sd-scripts/pull/179). Thanks to mgz-dev!
  - Git commit hash is added to the metadata for LoRA. Thanks to space-nuko!
  - Add ``--noise_offset`` option to each training script.
    - Implementation of https://www.crosslabs.org//blog/diffusion-with-offset-noise
    - This option may improve the ability to generate images that are darker or lighter overall. It also seems to work with LoRA training.
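
The idea behind offset noise, as described in the linked article, is to add one extra random shift per channel on top of the usual per-pixel Gaussian noise. The sketch below shows this with plain Python lists so that it runs without PyTorch; the function name `offset_noise` and its parameters are hypothetical, and the training scripts themselves operate on tensors instead.

```python
import random


def offset_noise(channels, height, width, noise_offset, rng=random):
    """Per-pixel Gaussian noise plus one shared Gaussian shift per channel.

    Roughly the tensor expression from the article:
    randn_like(latents) + noise_offset * randn(batch, channels, 1, 1)
    written here for a single sample.
    """
    noise = []
    for _ in range(channels):
        # One value per channel, broadcast over every pixel of that channel.
        shift = noise_offset * rng.gauss(0.0, 1.0)
        noise.append(
            [[rng.gauss(0.0, 1.0) + shift for _ in range(width)]
             for _ in range(height)]
        )
    return noise
```

With `noise_offset=0` this reduces to ordinary Gaussian noise; a small positive value lets the mean of each channel vary between samples, which is what the article credits for better very dark or very bright images.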

|

||||

|

||||

- 11 Feb. 2023, 2023/2/11:
  - ``lora_interrogator.py`` is added in ``networks`` folder. See ``python networks\lora_interrogator.py -h`` for usage.
    - For LoRAs where the activation word is unknown, this script compares the output of Text Encoder with and without the LoRA applied, to find out which tokens are affected by the LoRA. With luck, you can figure out the activation word. LoRAs trained with captions affect a wide range of tokens, so interrogating them seems to be difficult.
    - Batch size can be large (like 64 or 128).
  - ``train_textual_inversion.py`` now supports multiple init words.
  - The following feature has been removed and the behavior reverted to what it was before. Sorry for the confusion:
    > The number of items in each batch is now limited to the number of distinct images in the bucket. A bucket may contain fewer distinct images than the batch size, in which case the batch would otherwise contain duplicates of the same image.

|

||||

|

||||

- 10 Feb. 2023, 2023/2/10:
  - Updated ``requirements.txt`` to prevent pip upgrades from taking a long time or failing.
  - ``resize_lora.py`` keeps the metadata of the model. ``dimension is resized from ...`` is added to the top of ``ss_training_comment``.
  - ``merge_lora.py`` supports models with different ``alpha``s. If there is a problem, the old version is available as ``merge_lora_old.py``.
  - ``svd_merge_lora.py`` is added. This script merges LoRA models with any rank (dim) and alpha, and approximates the result with a new LoRA of a specified rank (dim) using SVD.
  - Note: merging scripts erase the metadata currently.
  - ``resize_images_to_resolution.py`` supports multibyte characters (such as Japanese) in filenames.

|

||||

|

||||

- 9 Feb. 2023, 2023/2/9:
  - Caption dropout is supported in ``train_db.py``, ``fine_tune.py`` and ``train_network.py``. Thanks to forestsource!
    - ``--caption_dropout_rate`` option specifies the dropout rate for captions (0~1.0, 0.1 means 10% chance for dropout). If dropout occurs, the image is trained with the empty caption. Default is 0 (no dropout).
    - ``--caption_dropout_every_n_epochs`` option specifies the interval, in epochs, at which captions are dropped entirely. If ``3`` is specified, in epochs 3, 6, 9, ..., all images are trained with empty captions. Default is None (no dropout).
    - ``--caption_tag_dropout_rate`` option specifies the dropout rate for tags (comma-separated tokens) (0~1.0, 0.1 means 10% chance for dropout). If dropout occurs, the tag is removed from the caption for that step only. If ``--keep_tokens`` option is set, the tags that are excluded from shuffling are not dropped. Default is 0 (no dropout).
  - The bulk image downsampling script is added. Documentation is [here](https://github.com/kohya-ss/sd-scripts/blob/main/train_network_README-ja.md#%E7%94%BB%E5%83%8F%E3%83%AA%E3%82%B5%E3%82%A4%E3%82%BA%E3%82%B9%E3%82%AF%E3%83%AA%E3%83%97%E3%83%88) (in Japanese). Thanks to bmaltais!
  - Typo check is added. Thanks to shirayu!
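
The three caption dropout options in the 9 Feb. entry can be read as the following decision procedure. This is a minimal sketch, not the scripts' actual code: the function `apply_caption_dropout` is hypothetical, epochs are assumed to be counted from 1, and `keep_tokens` is assumed to mean the number of leading tags that are protected.

```python
import random


def apply_caption_dropout(caption, epoch, *, caption_dropout_rate=0.0,
                          caption_dropout_every_n_epochs=None,
                          caption_tag_dropout_rate=0.0, keep_tokens=0,
                          rng=random):
    """Return the caption to train with for one image in the given epoch."""
    # Drop every caption on each n-th epoch (3, 6, 9, ... for n = 3).
    if caption_dropout_every_n_epochs and epoch % caption_dropout_every_n_epochs == 0:
        return ""
    # Drop the whole caption with probability caption_dropout_rate.
    if rng.random() < caption_dropout_rate:
        return ""
    tags = [tag.strip() for tag in caption.split(",")]
    kept = tags[:keep_tokens]  # protected tags are never dropped
    for tag in tags[keep_tokens:]:
        # Each remaining tag is dropped independently.
        if rng.random() >= caption_tag_dropout_rate:
            kept.append(tag)
    return ", ".join(kept)
```

For example, `apply_caption_dropout("1girl, solo, smile", epoch=3, caption_dropout_every_n_epochs=3)` returns an empty string, while with all options left at their defaults the caption is returned unchanged.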

|

||||

|

||||

- 6 Feb. 2023, 2023/2/6:
  - ``--bucket_reso_steps`` and ``--bucket_no_upscale`` options are added to training scripts (fine tuning, DreamBooth, LoRA and Textual Inversion) and ``prepare_buckets_latents.py``.
  - ``--bucket_reso_steps`` takes the steps for buckets in aspect ratio bucketing. Default is 64, same as before.
    - Any value greater than or equal to 1 can be specified; 64 is highly recommended, and otherwise a value divisible by 8 is recommended.
    - If less than 64 is specified, padding will occur within U-Net. The result is unknown.
    - If you specify a value that is not divisible by 8, the remainder is truncated inside the VAE, because the size of the latent is 1/8 of the image size.
  - If ``--bucket_no_upscale`` option is specified, images smaller than the bucket size will be processed without upscaling.
    - Internally, a bucket no larger than the image is created (for example, if the image is 300x300 and ``bucket_reso_steps=64``, the bucket is 256x256). The remainder of the image is trimmed.
    - Implementation of [#130](https://github.com/kohya-ss/sd-scripts/issues/130).
    - Images with an area larger than the maximum size specified by ``--resolution`` are downsampled, keeping the aspect ratio, so that their area matches the maximum size; the bucket is then created from that size.
  - The number of items in each batch is now limited to the number of distinct images in the bucket. These options split buckets more finely, so a bucket may hold fewer distinct images than the batch size, and a batch would otherwise contain duplicates of the same image.
    - For example, with 10 repeats, a bucket containing a single image, and a batch size of 10 or more, one batch containing ten copies of the same image used to be used once per epoch.
    - With this change, ten batches of size 1 are used per epoch.
  - ``--random_crop`` now also works with buckets enabled.
    - Instead of always cropping the center of the image, the crop is shifted left, right, up, and down before being used as training data. This is expected to let the model learn up to the edges of the image.
    - Implementation of discussion [#34](https://github.com/kohya-ss/sd-scripts/discussions/34).
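
The 300x300 example for ``--bucket_no_upscale`` amounts to rounding each side down to a multiple of the step, and the note about values not divisible by 8 follows from the VAE's 8x downsampling. The sketch below illustrates only that arithmetic; the function names are hypothetical, and the downsampling of images larger than ``--resolution`` is not modelled.

```python
def no_upscale_bucket(width, height, bucket_reso_steps=64):
    """Round each side down to a multiple of bucket_reso_steps (illustrative)."""
    if bucket_reso_steps < 1:
        raise ValueError("bucket_reso_steps must be >= 1")
    bucket_w = width - width % bucket_reso_steps
    bucket_h = height - height % bucket_reso_steps
    return bucket_w, bucket_h


def latent_size(bucket_w, bucket_h):
    """Latent resolution at 1/8 of the image size; any remainder is discarded."""
    return bucket_w // 8, bucket_h // 8
```

`no_upscale_bucket(300, 300, 64)` gives `(256, 256)`, matching the example in the entry. With a step of 60 the bucket stays at `(300, 300)`, but `latent_size(300, 300)` is `(37, 37)`, so 4 pixels per side are lost inside the VAE, which is why a step divisible by 8 is recommended.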

|

||||

|

||||

|

||||

Please read [Releases](https://github.com/kohya-ss/sd-scripts/releases) for recent updates.

|

||||

For recent updates, see [Releases](https://github.com/kohya-ss/sd-scripts/releases).

|

||||

|

||||

15

_typos.toml

Normal file

@@ -0,0 +1,15 @@

|

||||

# Files for typos
# Instruction: https://github.com/marketplace/actions/typos-action#getting-started

[default.extend-identifiers]

[default.extend-words]
NIN="NIN"
parms="parms"
nin="nin"
extention="extention" # Intentionally left
nd="nd"


[files]
extend-exclude = ["_typos.toml"]

|

||||

54

bitsandbytes_windows/cextension.py

Normal file

@@ -0,0 +1,54 @@

|

||||

import ctypes as ct
from pathlib import Path
from warnings import warn

from .cuda_setup.main import evaluate_cuda_setup


class CUDALibrary_Singleton(object):
    _instance = None

    def __init__(self):
        raise RuntimeError("Call get_instance() instead")

    def initialize(self):
        binary_name = evaluate_cuda_setup()
        package_dir = Path(__file__).parent
        binary_path = package_dir / binary_name

        if not binary_path.exists():
            print(f"CUDA SETUP: TODO: compile library for specific version: {binary_name}")
            legacy_binary_name = "libbitsandbytes.so"
            print(f"CUDA SETUP: Defaulting to {legacy_binary_name}...")
            binary_path = package_dir / legacy_binary_name
            if not binary_path.exists():
                print('CUDA SETUP: CUDA detection failed. Either CUDA driver not installed, CUDA not installed, or you have multiple conflicting CUDA libraries!')
                print('CUDA SETUP: If you compiled from source, try again with `make CUDA_VERSION=DETECTED_CUDA_VERSION` for example, `make CUDA_VERSION=113`.')
                raise Exception('CUDA SETUP: Setup Failed!')
            # self.lib = ct.cdll.LoadLibrary(binary_path)
            self.lib = ct.cdll.LoadLibrary(str(binary_path)) # $$$
        else:
            print(f"CUDA SETUP: Loading binary {binary_path}...")
            # self.lib = ct.cdll.LoadLibrary(binary_path)
            self.lib = ct.cdll.LoadLibrary(str(binary_path)) # $$$

    @classmethod
    def get_instance(cls):
        if cls._instance is None:
            cls._instance = cls.__new__(cls)
            cls._instance.initialize()
        return cls._instance


lib = CUDALibrary_Singleton.get_instance().lib
try:
    lib.cadam32bit_g32
    lib.get_context.restype = ct.c_void_p
    lib.get_cusparse.restype = ct.c_void_p
    COMPILED_WITH_CUDA = True
except AttributeError:
    warn(
        "The installed version of bitsandbytes was compiled without GPU support. "
        "8-bit optimizers and GPU quantization are unavailable."
    )
    COMPILED_WITH_CUDA = False

|

||||

BIN

bitsandbytes_windows/libbitsandbytes_cpu.dll

Normal file

Binary file not shown.

BIN

bitsandbytes_windows/libbitsandbytes_cuda116.dll

Normal file

Binary file not shown.

166

bitsandbytes_windows/main.py

Normal file

@@ -0,0 +1,166 @@

"""
extract factors the build is dependent on:
[X] compute capability
    [ ] TODO: Q - What if we have multiple GPUs of different makes?
- CUDA version
- Software:
    - CPU-only: only CPU quantization functions (no optimizer, no matrix multiplication)
    - CuBLAS-LT: full-build 8-bit optimizer
    - no CuBLAS-LT: no 8-bit matrix multiplication (`nomatmul`)

evaluation:
    - if paths faulty, return meaningful error
    - else:
        - determine CUDA version
        - determine capabilities
        - based on that set the default path
"""

import ctypes

from .paths import determine_cuda_runtime_lib_path


def check_cuda_result(cuda, result_val):
    # 3. Check for CUDA errors
    if result_val != 0:
        error_str = ctypes.c_char_p()
        cuda.cuGetErrorString(result_val, ctypes.byref(error_str))
        print(f"CUDA exception! Error code: {error_str.value.decode()}")

def get_cuda_version(cuda, cudart_path):
    # https://docs.nvidia.com/cuda/cuda-runtime-api/group__CUDART____VERSION.html#group__CUDART____VERSION
    try:
        cudart = ctypes.CDLL(cudart_path)
    except OSError:
        # TODO: shouldn't we error or at least warn here?
        print(f'ERROR: libcudart.so could not be read from path: {cudart_path}!')
        return None

    version = ctypes.c_int()
    check_cuda_result(cuda, cudart.cudaRuntimeGetVersion(ctypes.byref(version)))
    version = int(version.value)
    # The runtime reports 1000 * major + 10 * minor, e.g. 11060 for CUDA 11.6.
    major = version//1000
    minor = (version-(major*1000))//10

    if major < 11:
        print('CUDA SETUP: CUDA versions lower than 11 are currently not supported for LLM.int8(). You will only be able to use 8-bit optimizers and quantization routines!!')

    return f'{major}{minor}'


def get_cuda_lib_handle():
    # 1. find libcuda.so library (GPU driver) (/usr/lib)
    try:
        cuda = ctypes.CDLL("libcuda.so")
    except OSError:
        # TODO: shouldn't we error or at least warn here?
        print('CUDA SETUP: WARNING! libcuda.so not found! Do you have a CUDA driver installed? If you are on a cluster, make sure you are on a CUDA machine!')
        return None
    check_cuda_result(cuda, cuda.cuInit(0))

    return cuda


def get_compute_capabilities(cuda):
    """
    1. find libcuda.so library (GPU driver) (/usr/lib)
       init_device -> init variables -> call function by reference
    2. call extern C function to determine CC
       (https://docs.nvidia.com/cuda/cuda-driver-api/group__CUDA__DEVICE__DEPRECATED.html)
    3. Check for CUDA errors
       https://stackoverflow.com/questions/14038589/what-is-the-canonical-way-to-check-for-errors-using-the-cuda-runtime-api
    # bits taken from https://gist.github.com/f0k/63a664160d016a491b2cbea15913d549
    """


    nGpus = ctypes.c_int()
    cc_major = ctypes.c_int()
    cc_minor = ctypes.c_int()

    device = ctypes.c_int()

    check_cuda_result(cuda, cuda.cuDeviceGetCount(ctypes.byref(nGpus)))
    ccs = []
    for i in range(nGpus.value):
        check_cuda_result(cuda, cuda.cuDeviceGet(ctypes.byref(device), i))
        ref_major = ctypes.byref(cc_major)
        ref_minor = ctypes.byref(cc_minor)
        # 2. call extern C function to determine CC
        check_cuda_result(
            cuda, cuda.cuDeviceComputeCapability(ref_major, ref_minor, device)
        )
        ccs.append(f"{cc_major.value}.{cc_minor.value}")

    return ccs


# def get_compute_capability()-> Union[List[str, ...], None]: # FIXME: error
def get_compute_capability(cuda):
    """
    Extracts the highest compute capability from all available GPUs, as compute
    capabilities are downwards compatible. If no GPUs are detected, it returns
    None.
    """
    ccs = get_compute_capabilities(cuda)
    if ccs is not None:
        # TODO: handle different compute capabilities; for now, take the max
        return ccs[-1]
    return None


def evaluate_cuda_setup():
    print('')
    print('='*35 + 'BUG REPORT' + '='*35)
    print('Welcome to bitsandbytes. For bug reports, please submit your error trace to: https://github.com/TimDettmers/bitsandbytes/issues')
    print('For effortless bug reporting copy-paste your error into this form: https://docs.google.com/forms/d/e/1FAIpQLScPB8emS3Thkp66nvqwmjTEgxp8Y9ufuWTzFyr9kJ5AoI47dQ/viewform?usp=sf_link')
    print('='*80)
    # Windows build: always load the bundled CUDA 11.6 DLL.
    # Everything below this return is unreachable and kept from the original file.
    return "libbitsandbytes_cuda116.dll" # $$$

    binary_name = "libbitsandbytes_cpu.so"
    #if not torch.cuda.is_available():
        #print('No GPU detected. Loading CPU library...')
        #return binary_name

    cudart_path = determine_cuda_runtime_lib_path()
    if cudart_path is None:
        print(
            "WARNING: No libcudart.so found! Install CUDA or the cudatoolkit package (anaconda)!"
        )
        return binary_name

    print(f"CUDA SETUP: CUDA runtime path found: {cudart_path}")
    cuda = get_cuda_lib_handle()
    cc = get_compute_capability(cuda)
    print(f"CUDA SETUP: Highest compute capability among GPUs detected: {cc}")
    cuda_version_string = get_cuda_version(cuda, cudart_path)


    if cc == '':
        print(
            "WARNING: No GPU detected! Check your CUDA paths. Proceeding to load CPU-only library..."
        )
        return binary_name

    # 7.5 is the minimum CC for cublaslt
    has_cublaslt = cc in ["7.5", "8.0", "8.6"]

    # TODO:
    # (1) CUDA missing cases (no CUDA installed by CUDA driver (nvidia-smi accessible)
    # (2) Multiple CUDA versions installed

    # we use ls -l instead of nvcc to determine the cuda version
    # since most installations will have the libcudart.so installed, but not the compiler
    print(f'CUDA SETUP: Detected CUDA version {cuda_version_string}')

    def get_binary_name():
        "if not has_cublaslt (CC < 7.5), then we have to choose _nocublaslt.so"
        bin_base_name = "libbitsandbytes_cuda"
        if has_cublaslt:
            return f"{bin_base_name}{cuda_version_string}.so"
        else:
            return f"{bin_base_name}{cuda_version_string}_nocublaslt.so"

    binary_name = get_binary_name()

    return binary_name

|

||||

961

fine_tune.py

File diff suppressed because it is too large

465

fine_tune_README_ja.md

Normal file

@@ -0,0 +1,465 @@

|

||||

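Fine tuning that supports the training techniques proposed by NovelAI, automatic captioning, tagging, and environments such as Windows with 12 GB of VRAM (for v1.4/1.5).

## Overview

This script fine-tunes the U-Net of Stable Diffusion using Diffusers. It supports the following improvements described in NovelAI's article (for Aspect Ratio Bucketing I referred to NovelAI's code, but the final code is entirely original).

* Use the output of the second-to-last layer of CLIP (the Text Encoder) instead of the last layer.
* Train at non-square resolutions (Aspect Ratio Bucketing).
* Extend the token length from 75 to 225.
* Caption images with BLIP (automatic caption generation) and tag them automatically with DeepDanbooru or WD14Tagger.
* Hypernetwork training is also supported.
* Stable Diffusion v2.0 (base and 768/v) is supported.
* Reduce memory use and speed up training by computing the VAE outputs in advance and saving them to disk.

By default the Text Encoder is not trained. When fine-tuning the whole model, it seems to be common to train only the U-Net (NovelAI apparently does the same). The Text Encoder can also be trained by specifying an option.

## About the additional features

### Changing the CLIP output

CLIP (the Text Encoder) converts text into features so that the prompt is reflected in the image. Stable Diffusion uses the output of CLIP's last layer, but this can be changed to the output of the second-to-last layer. According to NovelAI, this makes the image reflect the prompt more accurately.

It is also possible to keep the original behavior and use the output of the last layer.

Note: Stable Diffusion 2.0 uses the second-to-last layer by default. Do not specify the clip_skip option.

### Training at non-square resolutions

Stable Diffusion was trained at 512\*512, but this script additionally trains at resolutions such as 256\*1024 and 384\*640. This reduces the portion of each image that is cropped away, so the relationship between prompts and images is expected to be learned more accurately.

The training resolutions are generated by adjusting width and height in 64-pixel steps, within the area (= memory usage) of the resolution given as a parameter.

In machine learning it is common to make all inputs the same size, but this is not a hard constraint; it is enough for the sizes to match within each batch. What NovelAI calls bucketing appears to mean classifying the training data in advance into training resolutions according to aspect ratio. Each batch is then built from the images of a single bucket, so all images in a batch have the same size.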
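As a rough illustration of the bucketing described above, the sketch below enumerates resolutions in 64-pixel steps whose area stays within the given resolution, then picks the bucket whose aspect ratio is closest to an image. It is an assumption-laden sketch, not the code used by the scripts: the function names, the 256/1024 size limits used as defaults, and the nearest-aspect-ratio rule are illustrative choices.

```python
def make_bucket_resolutions(max_reso=(512, 512), min_size=256, max_size=1024, step=64):
    """Enumerate (width, height) pairs in multiples of `step` whose area does
    not exceed the area of `max_reso`."""
    max_area = max_reso[0] * max_reso[1]
    resos = set()
    width = min_size
    while width <= max_size:
        # Tallest height, in multiples of step, that keeps the area within budget.
        height = min(max_size, (max_area // width) // step * step)
        if height >= min_size:
            resos.add((width, height))
            resos.add((height, width))  # the transposed bucket has the same area
        width += step
    return sorted(resos)


def nearest_bucket(image_width, image_height, resos):
    """Pick the bucket whose aspect ratio is closest to the image's."""
    aspect = image_width / image_height
    return min(resos, key=lambda r: abs(r[0] / r[1] - aspect))


resos = make_bucket_resolutions((512, 512))
print(resos)
print(nearest_bucket(800, 800, resos))
```

With the default 512x512 budget the result includes the 256x1024 and 384x640 resolutions mentioned above, alongside 512x512 itself.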

|

||||

|

||||

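### Extending the token length from 75 to 225

Stable Diffusion accepts at most 75 tokens (77 including the start and end tokens); this is extended to 225 tokens.

However, the maximum length CLIP accepts is 75 tokens, so with 225 tokens the input is simply split into three parts, CLIP is called on each part, and the results are concatenated.

Note: I am not sure whether this is the preferred implementation. It seems to work for now. For 2.0 in particular there is no implementation to refer to, so it is implemented independently.

Note: Automatic1111's Web UI apparently takes commas into account when splitting, but my implementation does not go that far and splits the tokens naively.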
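The naive three-way split can be sketched on plain token-id lists as follows. This is only an illustration under stated assumptions: the function name is hypothetical, padding with the end token is an assumption, and the start/end ids in the usage lines are placeholder values passed in by the caller. The CLIP call on each chunk and the concatenation of the three outputs are omitted.

```python
def split_into_chunks(token_ids, bos_id, eos_id, chunk_len=75, num_chunks=3):
    """Split up to chunk_len * num_chunks tokens into num_chunks sequences of
    chunk_len + 2 tokens each (start token + 75 tokens + end token)."""
    max_len = chunk_len * num_chunks
    body = list(token_ids[:max_len])               # truncate overly long prompts
    body += [eos_id] * (max_len - len(body))       # pad short prompts (assumed: end token)
    chunks = []
    for i in range(num_chunks):
        part = body[i * chunk_len:(i + 1) * chunk_len]
        chunks.append([bos_id] + part + [eos_id])
    return chunks


# Placeholder ids for illustration only.
chunks = split_into_chunks(list(range(100)), bos_id=49406, eos_id=49407)
print(len(chunks), [len(c) for c in chunks])
```

Each chunk has the 77-token shape CLIP expects, so the encoder can be called three times and its outputs joined along the sequence dimension.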

|

||||

|

||||

## 環境整備

|

||||

|

||||

このリポジトリの[README](./README-ja.md)を参照してください。

|

||||

|

||||

## 教師データの用意

|

||||

|

||||

学習させたい画像データを用意し、任意のフォルダに入れてください。リサイズ等の事前の準備は必要ありません。

|

||||

ただし学習解像度よりもサイズが小さい画像については、超解像などで品質を保ったまま拡大しておくことをお勧めします。

|

||||

|

||||

複数の教師データフォルダにも対応しています。前処理をそれぞれのフォルダに対して実行する形となります。

|

||||

|

||||

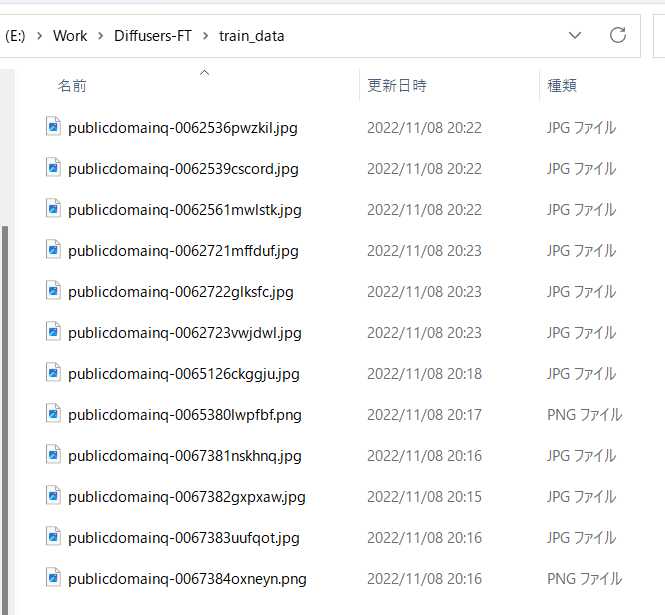

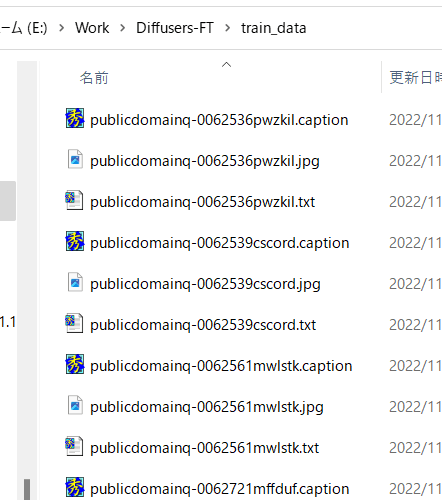

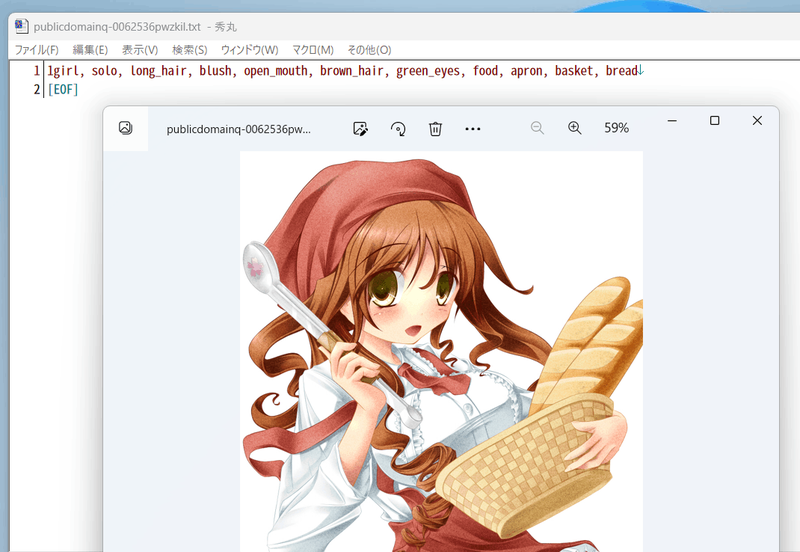

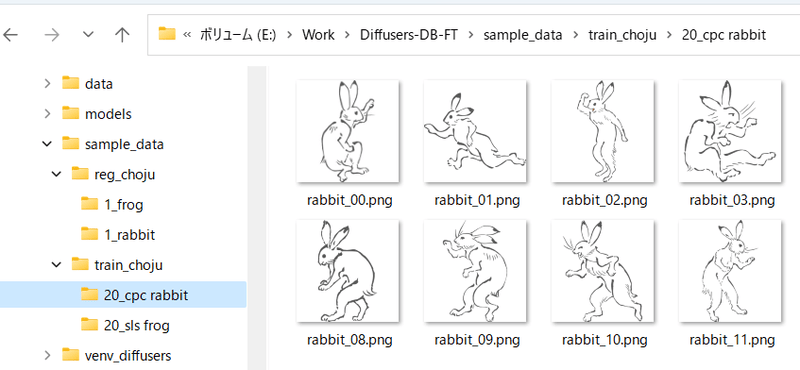

たとえば以下のように画像を格納します。

|

||||

|

||||

|

||||

|

||||

## 自動キャプショニング

|

||||

キャプションを使わずタグだけで学習する場合はスキップしてください。

|

||||

|

||||

また手動でキャプションを用意する場合、キャプションは教師データ画像と同じディレクトリに、同じファイル名、拡張子.caption等で用意してください。各ファイルは1行のみのテキストファイルとします。

|

||||

|

||||

### BLIPによるキャプショニング

|

||||

|

||||

最新版ではBLIPのダウンロード、重みのダウンロード、仮想環境の追加は不要になりました。そのままで動作します。

|

||||

|

||||

finetuneフォルダ内のmake_captions.pyを実行します。

|

||||

|

||||

```

|

||||

python finetune\make_captions.py --batch_size <バッチサイズ> <教師データフォルダ>

|

||||

```

|

||||

|

||||

バッチサイズ8、教師データを親フォルダのtrain_dataに置いた場合、以下のようになります。

|

||||

|

||||

```

|

||||

python finetune\make_captions.py --batch_size 8 ..\train_data

|

||||

```

|

||||

|

||||

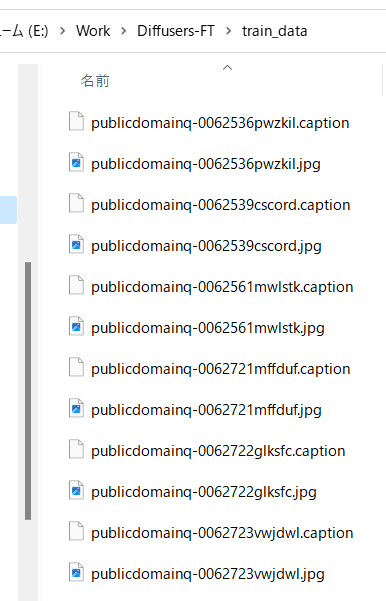

キャプションファイルが教師データ画像と同じディレクトリに、同じファイル名、拡張子.captionで作成されます。

|

||||

|

||||

batch_sizeはGPUのVRAM容量に応じて増減してください。大きいほうが速くなります(VRAM 12GBでももう少し増やせると思います)。

|

||||

max_lengthオプションでキャプションの最大長を指定できます。デフォルトは75です。モデルをトークン長225で学習する場合には長くしても良いかもしれません。

|

||||

caption_extensionオプションでキャプションの拡張子を変更できます。デフォルトは.captionです(.txtにすると後述のDeepDanbooruと競合します)。

|

||||

|

||||

複数の教師データフォルダがある場合には、それぞれのフォルダに対して実行してください。

|

||||

|

||||

なお、推論にランダム性があるため、実行するたびに結果が変わります。固定する場合には--seedオプションで「--seed 42」のように乱数seedを指定してください。

|

||||

|

||||

その他のオプションは--helpでヘルプをご参照ください(パラメータの意味についてはドキュメントがまとまっていないようで、ソースを見るしかないようです)。

|

||||

|

||||

デフォルトでは拡張子.captionでキャプションファイルが生成されます。

|

||||

|

||||

|

||||

|

||||

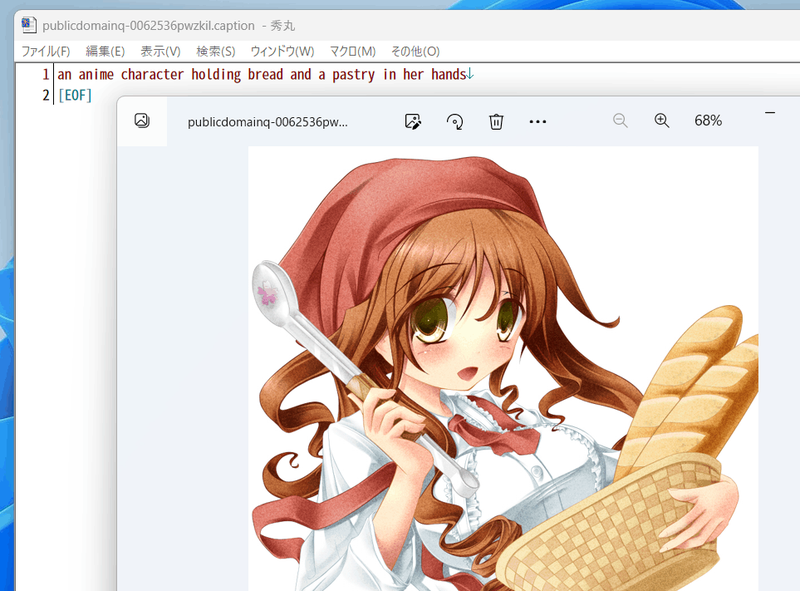

たとえば以下のようなキャプションが付きます。

|

||||

|

||||

|

||||

|

||||

## DeepDanbooruによるタグ付け

|

||||

danbooruタグのタグ付け自体を行わない場合は「キャプションとタグ情報の前処理」に進んでください。

|

||||

|

||||

タグ付けはDeepDanbooruまたはWD14Taggerで行います。WD14Taggerのほうが精度が良いようです。WD14Taggerでタグ付けする場合は、次の章へ進んでください。

|

||||

|

||||

### 環境整備

|

||||

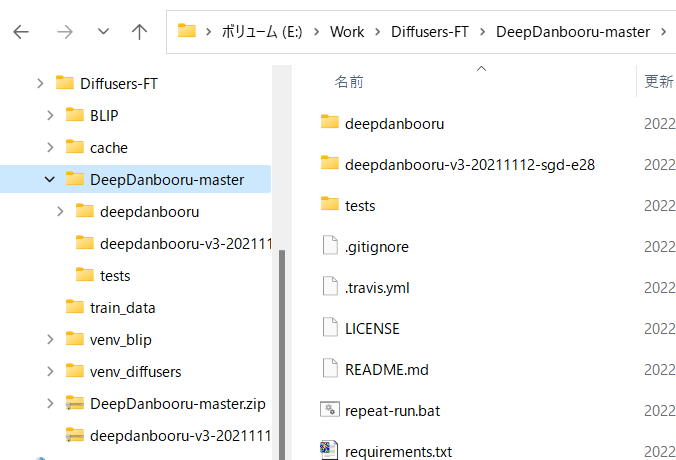

DeepDanbooru https://github.com/KichangKim/DeepDanbooru を作業フォルダにcloneしてくるか、zipをダウンロードして展開します。私はzipで展開しました。

|

||||

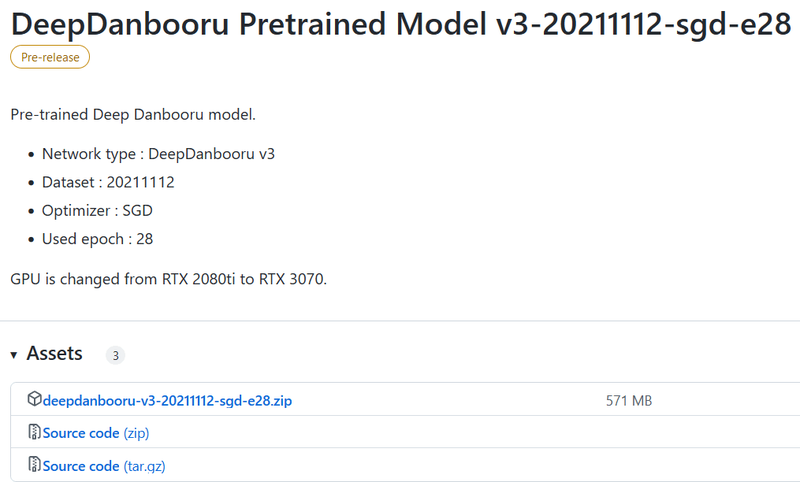

またDeepDanbooruのReleasesのページ https://github.com/KichangKim/DeepDanbooru/releases の「DeepDanbooru Pretrained Model v3-20211112-sgd-e28」のAssetsから、deepdanbooru-v3-20211112-sgd-e28.zipをダウンロードしてきてDeepDanbooruのフォルダに展開します。

|

||||

|

||||

以下からダウンロードします。Assetsをクリックして開き、そこからダウンロードします。

|

||||

|

||||

|

||||

|

||||

以下のようなこういうディレクトリ構造にしてください

|

||||

|

||||

|

||||

|

||||

Diffusersの環境に必要なライブラリをインストールします。DeepDanbooruのフォルダに移動してインストールします(実質的にはtensorflow-ioが追加されるだけだと思います)。

|

||||

|

||||

```

|

||||

pip install -r requirements.txt

|

||||

```

|

||||

|

||||

続いてDeepDanbooru自体をインストールします。

|

||||

|

||||

```

|

||||

pip install .

|

||||

```

|

||||

|

||||

以上でタグ付けの環境整備は完了です。

|

||||

|

||||

### タグ付けの実施

|

||||

DeepDanbooruのフォルダに移動し、deepdanbooruを実行してタグ付けを行います。

|

||||

|

||||

```

|

||||

deepdanbooru evaluate <教師データフォルダ> --project-path deepdanbooru-v3-20211112-sgd-e28 --allow-folder --save-txt

|

||||

```

|

||||

|

||||

教師データを親フォルダのtrain_dataに置いた場合、以下のようになります。

|

||||

|

||||

```

|

||||

deepdanbooru evaluate ../train_data --project-path deepdanbooru-v3-20211112-sgd-e28 --allow-folder --save-txt

|

||||

```

|

||||

|

||||

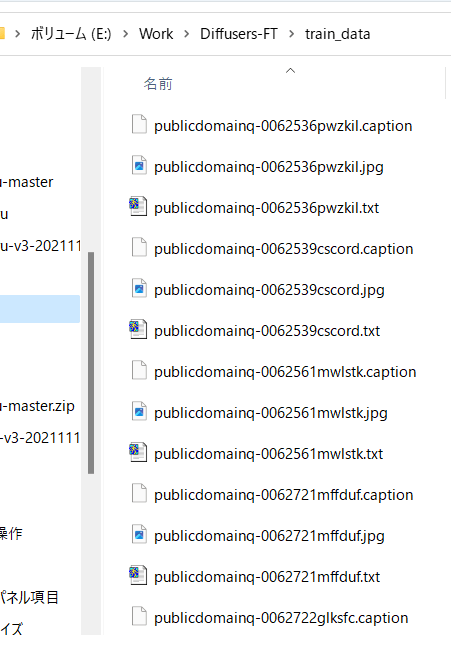

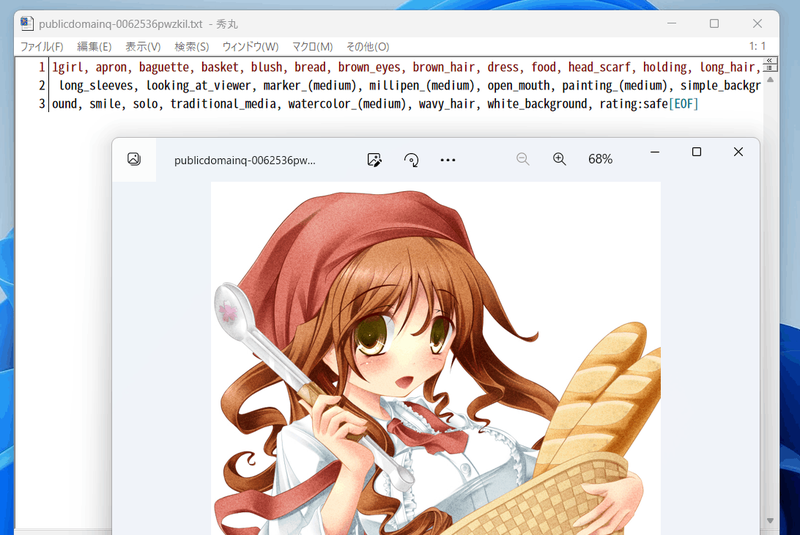

タグファイルが教師データ画像と同じディレクトリに、同じファイル名、拡張子.txtで作成されます。1件ずつ処理されるためわりと遅いです。

|

||||

|

||||

複数の教師データフォルダがある場合には、それぞれのフォルダに対して実行してください。

|

||||

|

||||

以下のように生成されます。

|

||||

|

||||

|

||||

|

||||

こんな感じにタグが付きます(すごい情報量……)。

|

||||

|

||||

|

||||

|

||||

## WD14Taggerによるタグ付け

|

||||

DeepDanbooruの代わりにWD14Taggerを用いる手順です。

|

||||

|

||||

Automatic1111氏のWebUIで使用しているtaggerを利用します。こちらのgithubページ(https://github.com/toriato/stable-diffusion-webui-wd14-tagger#mrsmilingwolfs-model-aka-waifu-diffusion-14-tagger )の情報を参考にさせていただきました。

|

||||

|

||||

最初の環境整備で必要なモジュールはインストール済みです。また重みはHugging Faceから自動的にダウンロードしてきます。

|

||||

|

||||

### タグ付けの実施

|

||||

スクリプトを実行してタグ付けを行います。

|

||||

```

|

||||

python tag_images_by_wd14_tagger.py --batch_size <バッチサイズ> <教師データフォルダ>

|

||||

```

|

||||

|

||||

教師データを親フォルダのtrain_dataに置いた場合、以下のようになります。

|

||||

```

|

||||

python tag_images_by_wd14_tagger.py --batch_size 4 ..\train_data

|

||||

```

|

||||

|

||||

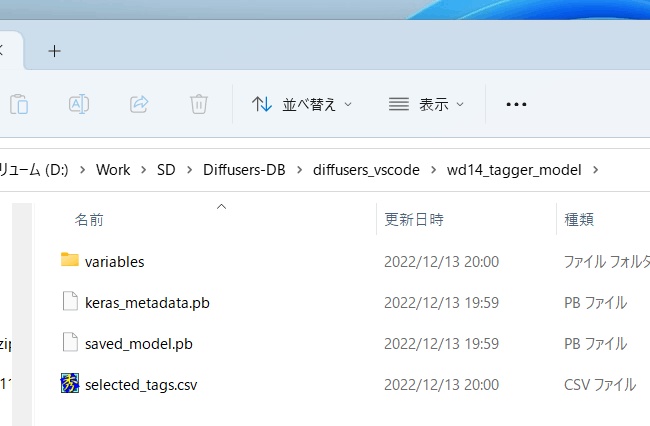

初回起動時にはモデルファイルがwd14_tagger_modelフォルダに自動的にダウンロードされます(フォルダはオプションで変えられます)。以下のようになります。

|

||||

|

||||

|

||||

|

||||

タグファイルが教師データ画像と同じディレクトリに、同じファイル名、拡張子.txtで作成されます。

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

threshオプションで、判定されたタグのconfidence(確信度)がいくつ以上でタグをつけるかが指定できます。デフォルトはWD14Taggerのサンプルと同じ0.35です。値を下げるとより多くのタグが付与されますが、精度は下がります。

|

||||

batch_sizeはGPUのVRAM容量に応じて増減してください。大きいほうが速くなります(VRAM 12GBでももう少し増やせると思います)。caption_extensionオプションでタグファイルの拡張子を変更できます。デフォルトは.txtです。

|

||||

model_dirオプションでモデルの保存先フォルダを指定できます。

|

||||

またforce_downloadオプションを指定すると保存先フォルダがあってもモデルを再ダウンロードします。

|

||||

|

||||

複数の教師データフォルダがある場合には、それぞれのフォルダに対して実行してください。
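The effect of the thresh option can be pictured with a minimal sketch: keep every tag whose confidence is at or above the threshold. The function name and the sample tags and scores are made up for illustration; the actual script's post-processing may differ.

```python
def select_tags(tag_names, confidences, thresh=0.35):
    """Keep the tags whose confidence is at or above `thresh`."""
    return [name for name, conf in zip(tag_names, confidences) if conf >= thresh]


names = ["1girl", "solo", "hat"]          # illustrative tag names
scores = [0.98, 0.40, 0.10]               # illustrative confidences
print(select_tags(names, scores))         # default threshold
print(select_tags(names, scores, 0.05))   # lower threshold: more tags, less accurate
```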

|

||||

|

||||

## Preprocessing captions and tag information

To make them easier for the scripts to process, the captions and tags are gathered into a single metadata file.

### Preprocessing captions

To put the captions into the metadata, run the following in your working folder (not needed if captions are not used for training). The command is actually written on a single line; the same applies to the commands below.

```
python merge_captions_to_metadata.py <training data folder>
    --in_json <metadata file to read>
    <metadata file name>
```

The metadata file name can be anything you like.

If the training data is in train_data, there is no metadata file to read, and the metadata file is meta_cap.json, the command looks like this.

```
python merge_captions_to_metadata.py train_data meta_cap.json
```

The caption_extension option specifies the caption file extension.

If you have multiple training data folders, specify the full_path argument (the metadata then stores full paths) and run the script for each folder.

```
python merge_captions_to_metadata.py --full_path
    train_data1 meta_cap1.json
python merge_captions_to_metadata.py --full_path --in_json meta_cap1.json
    train_data2 meta_cap2.json
```

If in_json is omitted and the destination metadata file already exists, the script reads from that file and overwrites it.

__Note: It is safer to change the in_json option and the destination on each run so that the output goes to a separate metadata file.__
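The chaining of metadata files can be pictured with the sketch below, which merges captions into a dictionary keyed per image. The metadata layout (one entry per image with a `caption` field), the function name, and the use of the file stem or the extension-less path as the key are assumptions made for illustration; check the script for the real format.

```python
from pathlib import PurePosixPath


def merge_captions(metadata, captions, full_path=False):
    """Return a copy of `metadata` with captions added.

    `captions` maps a caption file path to its text. The key is the file
    stem, or the path without extension when full_path is set (assumed
    layout, for illustration only).
    """
    merged = {key: dict(value) for key, value in metadata.items()}
    for caption_path, text in captions.items():
        path = PurePosixPath(caption_path)
        key = str(path.with_suffix("")) if full_path else path.stem
        merged.setdefault(key, {})["caption"] = text.strip()
    return merged


# First folder starts from empty metadata; the second run reads the first result.
meta1 = merge_captions({}, {"train_data1/img001.caption": "a girl standing\n"}, full_path=True)
meta2 = merge_captions(meta1, {"train_data2/img001.caption": "a cat\n"}, full_path=True)
print(meta2)
```

Storing full paths keeps images with the same file name in different folders from overwriting each other, which is why full_path is needed with multiple folders.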

|

||||

|

||||

### Preprocessing tags

In the same way, gather the tags into the metadata (not needed if tags are not used for training).

```
python merge_dd_tags_to_metadata.py <training data folder>
    --in_json <metadata file to read>
    <metadata file to write>
```

With the same directory layout as before, reading meta_cap.json and writing meta_cap_dd.json, the command looks like this.

```
python merge_dd_tags_to_metadata.py train_data --in_json meta_cap.json meta_cap_dd.json
```

If you have multiple training data folders, specify the full_path argument and run the script for each folder.

```
python merge_dd_tags_to_metadata.py --full_path --in_json meta_cap2.json
    train_data1 meta_cap_dd1.json
python merge_dd_tags_to_metadata.py --full_path --in_json meta_cap_dd1.json
    train_data2 meta_cap_dd2.json
```

If in_json is omitted and the destination metadata file already exists, the script reads from that file and overwrites it.

__Note: It is safer to change the in_json option and the destination on each run so that the output goes to a separate metadata file.__

|

||||

|

||||

### Cleaning captions and tags

At this point the metadata file contains both the captions and the DeepDanbooru tags. However, automatically generated captions have inconsistent wording (\*), and the tags contain underscores and rating entries (in the case of DeepDanbooru), so it is a good idea to clean the captions and tags, for example with your editor's find-and-replace function.

\* For example, when training on anime-style girls, the captions vary between girl/girls/woman/women. Also, "anime girl" may be better written simply as "girl".

A cleaning script is provided; edit its contents to suit your situation before using it.

(Specifying the training data folder is no longer necessary. All data in the metadata is cleaned.)

```
python clean_captions_and_tags.py <metadata file to read> <metadata file to write>
```

Note that --in_json is not used here. For example:

```
python clean_captions_and_tags.py meta_cap_dd.json meta_clean.json
```

This completes the preprocessing of captions and tags.
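The kind of cleaning described above can be sketched as two small functions. These rules are illustrative assumptions rather than the contents of clean_captions_and_tags.py: the function names are hypothetical, rating entries are assumed to look like `rating:safe`, and only the "anime girl" to "girl" replacement is shown for captions.

```python
import re


def clean_tags(tags):
    """Drop rating entries and replace underscores with spaces in a
    comma-separated tag string."""
    cleaned = []
    for tag in tags.split(","):
        tag = tag.strip()
        if not tag or tag.startswith("rating:"):  # assumed rating tag format
            continue
        cleaned.append(tag.replace("_", " "))
    return ", ".join(cleaned)


def clean_caption(caption):
    """Normalize wording in a caption; add more rules to suit your data."""
    return re.sub(r"\banime girl\b", "girl", caption)


print(clean_tags("1girl, long_hair, rating:safe"))
print(clean_caption("a cute anime girl with a hat"))
```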

|

||||

|

||||

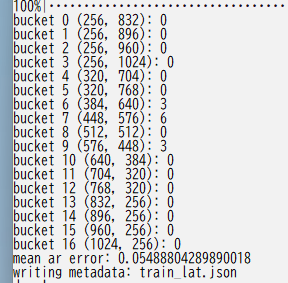

## Pre-computing latents

To speed up training, the latent representations of the images are computed in advance and saved to disk. Bucketing (classifying the training data by aspect ratio) is performed at the same time.

Enter the following in your working folder.

```
python prepare_buckets_latents.py <training data folder>
    <metadata file to read> <metadata file to write>
    <name of the model to fine-tune, or checkpoint>
    --batch_size <batch size>
    --max_resolution <resolution as width,height>
    --mixed_precision <precision>
```

With the model model.ckpt, a batch size of 4, a training resolution of 512\*512, and precision no (float32), reading the metadata from meta_clean.json and writing it to meta_lat.json, the command looks like this.

```
python prepare_buckets_latents.py
    train_data meta_clean.json meta_lat.json model.ckpt
    --batch_size 4 --max_resolution 512,512 --mixed_precision no
```

The latents are saved in the training data folder in numpy npz format.

When loading a Stable Diffusion 2.0 model, specify the --v2 option (--v_parameterization is not needed).

The minimum bucket resolution can be set with --min_bucket_reso and the maximum with --max_bucket_reso. The defaults are 256 and 1024 respectively. For example, with a minimum of 384, resolutions such as 256\*1024 and 320\*768 are no longer used.

If you raise the resolution to something like 768\*768, it is a good idea to specify a maximum such as 1280.

The --flip_aug option enables horizontal-flip augmentation (data augmentation). This can effectively double the amount of data, but if the data is not left-right symmetric (for example a character's appearance or hairstyle), training will not go well.

(This is a simple implementation that also computes latents for the flipped images and saves them as \*\_flip.npz files. No particular option is needed for fine_tune.py. If files with \_flip exist, files with and without flip are loaded at random.)
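The random choice between the normal and the flipped latents file can be sketched as below. The function name and the 50/50 probability are assumptions for illustration; the text above only states that the two files are loaded at random when a \_flip file exists.

```python
import random
from pathlib import Path


def pick_latents_file(npz_path, rng=random):
    """Return the flipped latents file half of the time, if it exists."""
    path = Path(npz_path)
    flipped = path.with_name(path.stem + "_flip.npz")
    if flipped.exists() and rng.random() < 0.5:  # assumed 50/50 choice
        return flipped
    return path


# Without a *_flip.npz file next to it, the original path is always returned.
print(pick_latents_file("train_data/img001.npz"))
```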

|

||||

|

||||

The batch size can probably be raised a little further even with 12 GB of VRAM.

The resolution must be divisible by 64 and is specified as "width,height". The resolution directly determines memory usage during fine tuning. With 12 GB of VRAM, 512,512 seems to be the limit (\*). With 16 GB it may be possible to go up to 512,704 or 512,768. Even at 256,256 and similar, 8 GB of VRAM appears to be difficult (parameters, the optimizer, and so on need a fixed amount of memory regardless of resolution).

\* There was also a report that training with batch size 1 worked at 640,640 with 12 GB of VRAM.

The bucketing results are displayed as shown below.

If you have multiple training data folders, specify the full_path argument and run the script for each folder.

```
python prepare_buckets_latents.py --full_path
    train_data1 meta_clean.json meta_lat1.json model.ckpt
    --batch_size 4 --max_resolution 512,512 --mixed_precision no

python prepare_buckets_latents.py --full_path
    train_data2 meta_lat1.json meta_lat2.json model.ckpt
    --batch_size 4 --max_resolution 512,512 --mixed_precision no
```

The source and destination can be the same file, but using separate files is safer.

__Note: It is safer to change the arguments on each run and write to a separate metadata file.__

|

||||

|

||||

|

||||

## Running training

For example, run it as follows. These settings are chosen to save memory.

```
accelerate launch --num_cpu_threads_per_process 1 fine_tune.py
    --pretrained_model_name_or_path=model.ckpt
    --in_json meta_lat.json
    --train_data_dir=train_data
    --output_dir=fine_tuned
    --shuffle_caption
    --train_batch_size=1 --learning_rate=5e-6 --max_train_steps=10000
    --use_8bit_adam --xformers --gradient_checkpointing
    --mixed_precision=bf16
    --save_every_n_epochs=4
```

For accelerate's num_cpu_threads_per_process, specifying 1 is usually a good choice.

pretrained_model_name_or_path specifies the model to train (a Stable Diffusion checkpoint or a Diffusers model). Stable Diffusion checkpoints in .ckpt and .safetensors format are supported (detected automatically from the extension).

in_json specifies the metadata file written when the latents were cached.

train_data_dir specifies the training data folder, and output_dir the folder to which the trained model is written.

With shuffle_caption, captions and tags are shuffled in comma-separated units during training (the method used by Waifu Diffusion v1.3).
(Some of the leading tokens can be kept fixed without shuffling. See keep_tokens under the other options.)
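A minimal sketch of comma-unit shuffling with a fixed prefix follows. The function name and signature are hypothetical; "tokens" here means comma-separated units, as in the description above, not tokenizer tokens.

```python
import random


def shuffle_caption(caption, keep_tokens=0, rng=random):
    """Shuffle comma-separated units, keeping the first `keep_tokens` in place."""
    tokens = [t.strip() for t in caption.split(",")]
    fixed, rest = tokens[:keep_tokens], tokens[keep_tokens:]
    rng.shuffle(rest)  # in-place shuffle of the non-fixed part
    return ", ".join(fixed + rest)


# The first unit stays in front; the order of the rest varies per call.
print(shuffle_caption("1girl, solo, hat, smile", keep_tokens=1))
```

Keeping the leading units fixed is useful when the first tag carries the main subject and should always appear at the start of the prompt.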

|

||||

|

||||

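train_batch_size specifies the batch size. With 12 GB of VRAM, specify about 1 or 2. The usable value also depends on the resolution.
The amount of data actually used for training is "batch size × number of steps". When you increase the batch size, you can reduce the number of steps accordingly.

learning_rate specifies the learning rate. For example, Waifu Diffusion v1.3 appears to use 5e-6.
max_train_steps specifies the number of steps.

use_8bit_adam enables the 8-bit Adam optimizer. It saves memory and is faster, but accuracy may decrease.

xformers replaces CrossAttention to save memory and increase speed.
Note: As of 11/9, xformers raises an error in float32 training, so use bf16/fp16, or specify mem_eff_attn instead to use the memory-efficient CrossAttention (which is slower than xformers).

gradient_checkpointing enables gradient checkpointing. Training becomes slower but uses less memory.

mixed_precision specifies whether to use mixed precision. Specifying "fp16" or "bf16" saves memory at the cost of precision.
"fp16" and "bf16" use about the same amount of memory, and some say bf16 gives better training results (in my own tests I did not notice much difference).
Specifying "no" disables mixed precision (float32 is used).

Note: Loading a checkpoint trained with bf16 in AUTOMATIC1111's Web UI appears to cause an error. This seems to be because the bfloat16 data type triggers an error in the Web UI's model safety checker. Specify the save_precision option to save in fp16 or float32 format. Saving in safetensors format also seems to work.

save_every_n_epochs saves the model being trained every time that number of epochs has passed.

### Stable Diffusion 2.0 support

When using Hugging Face's stable-diffusion-2-base, specify the --v2 option. When using stable-diffusion-2 or 768-v-ema.ckpt, specify both --v2 and --v_parameterization.

### Improving accuracy and speed when memory is available

First, removing gradient_checkpointing increases speed. However, the batch size you can set becomes smaller, so choose the settings while watching the balance between accuracy and speed.

Increasing the batch size improves both speed and accuracy. Increase it within the available memory while checking the time per data item (speed can actually drop when memory is nearly full).

### Changing the CLIP output used

Specifying 2 for the clip_skip option uses the output of the second-to-last layer. With 1, or when the option is omitted, the last layer is used.

The trained model should be usable for inference in Automatic1111's Web UI.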

|

||||

|

||||